A Face to Open Doors is an interactive installation in which your face determines your fate. Commissioned by IWM London for Refugee Season (2020–2021), the work invites visitors into a speculative immigration system where emotional expression becomes evidence.

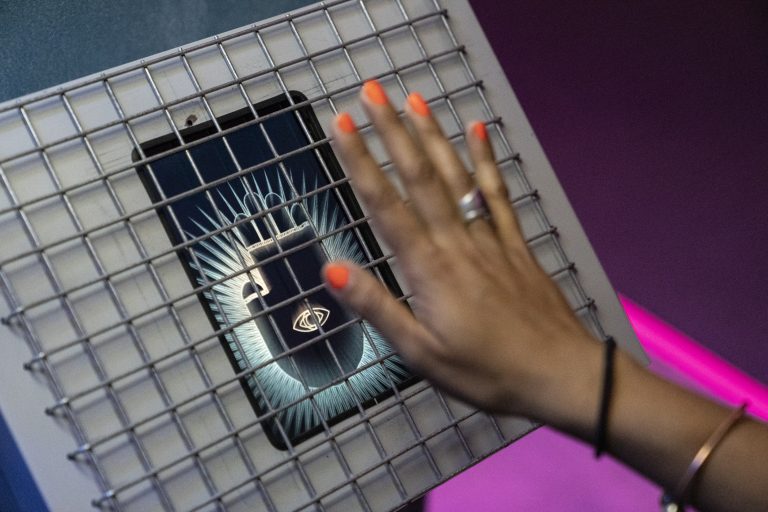

Inside a pop-up immigration booth, an artefact from a near-future society, you are assessed by an AI officer. As a series of videos plays, the system tracks your facial responses and asks you to perform specific emotions to determine your credibility. Rather than answering questions, you let your face do the talking.

Smooth, efficient and quietly unsettling, the experience explores a system where judgement is automated, yet remains deeply human. Inspired by emerging technologies in border control, the work reflects on how bias, belief and emotion shape decisions that can carry life-or-death consequences.

“There simply is no evidence that machines are able to discern lies from truth because there is no direct nor predictable correlation between lying and any physiological phenomena. We cannot see lies in the body. These decisions remain social, even when technologies are being used. We must ask – ‘Who is the person making the decision and how?’ – even when there appears to be no person around.”

Dr Andy Balmer

University of Manchester

In asylum processes, decisions often come down to whether someone is believed. Evidence is rarely straightforward, and individuals must demonstrate a “credible” account of their experience under conditions of stress, trauma and cultural difference.

Small details can carry disproportionate weight. Avoiding eye contact, inconsistencies in memory, or the way emotion is expressed can all be interpreted as signs of deception. At the same time, expressing too much emotion — or too little — can equally undermine credibility.

This takes place within a system already defined by scale and complexity. Between 2010 and 2020, Home Office officials made more than 5,700 changes to the immigration rules, which more than doubled in length, from 145,000 to almost 375,000 words. At the same time, machine learning is beginning to enter this space. In 2014, trials were carried out at Bucharest Airport and on the US–Mexico border using a system called AVATAR (Automated Virtual Agent for Truth Assessments in Real-Time), which analyses facial movement, voice, body language and physiological signals to assess deception.

Other systems, such as the EU-funded iBorderCtrl project with its Silent Talker technology, similarly attempt to identify deception through microgestures and behavioural signals.

A Face to Open Doors draws these conditions together. It places the participant within a system that appears efficient and objective, while revealing how fragile and subjective its foundations are.

Sources include Home Office immigration rule change records (2010–2020) and reporting on automated border control systems such as AVATAR (trials from 2014) and iBorderCtrl (EU pilot, 2016–2019).

May Abdalla

Amy Rose

Michael Golembewski

Jack Ratcliffe

Brendan Chitty & Ruth Shepherd

Cecilia Gonzalez Barragan & Ana Vilar

Tony Comley

Katerina Athanasopoulou

Chu-Li Shewring

Gemma Painton

Antonis Papamichael & Will Brady

Charlotte Northall & Jane Wheeler

Denis Kierans, Frances Webber, Professor Amina Memon, Dr Zoe Given-Wilson, Dr Andy Balmer, Amit Katwala, Theo Middleton